Post content:

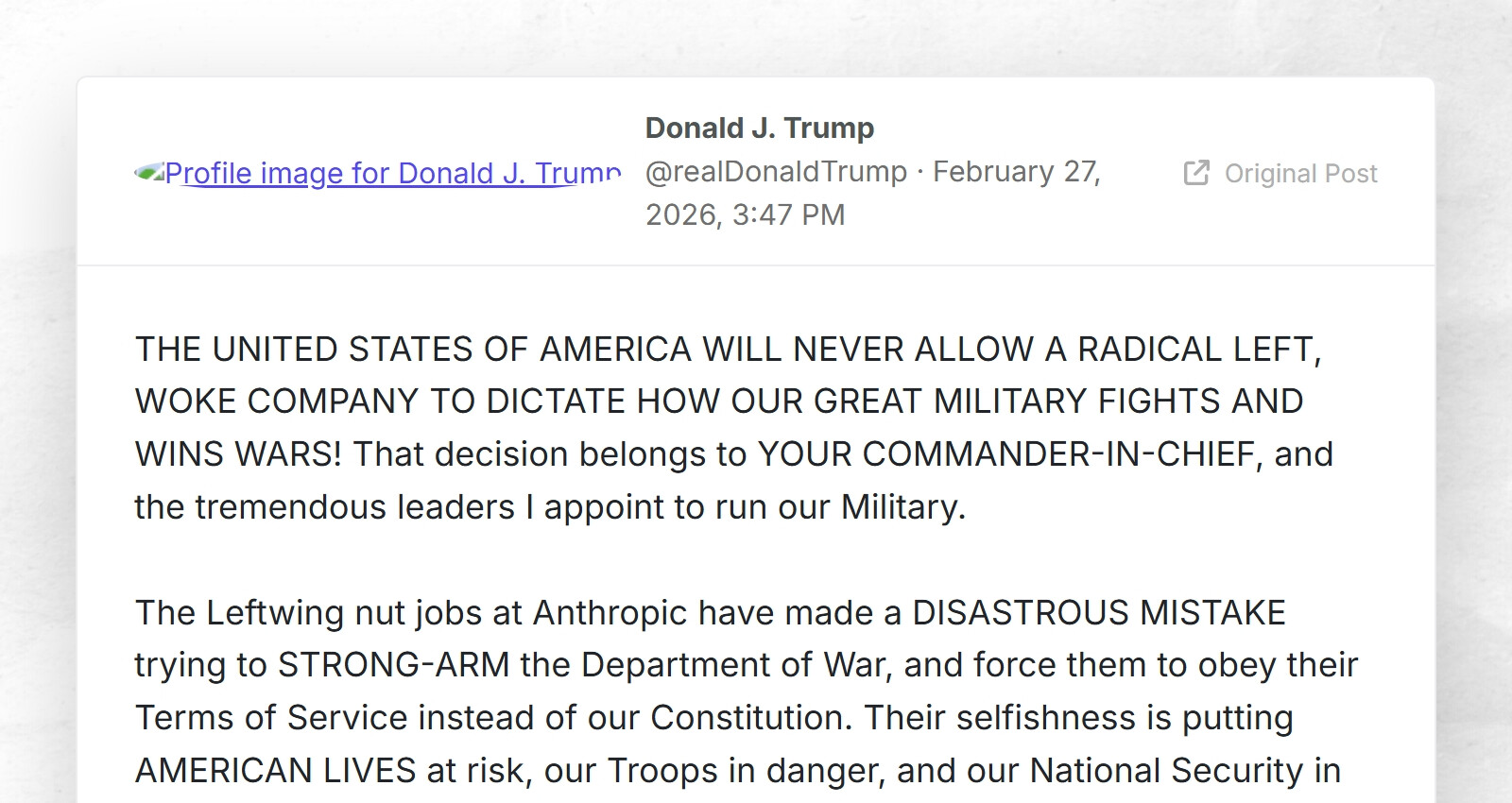

THE UNITED STATES OF AMERICA WILL NEVER ALLOW A RADICAL LEFT, WOKE COMPANY TO DICTATE HOW OUR GREAT MILITARY FIGHTS AND WINS WARS! That decision belongs to YOUR COMMANDER-IN-CHIEF, and the tremendous leaders I appoint to run our Military.

The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Department of War, and force them to obey their Terms of Service instead of our Constitution. Their selfishness is putting AMERICAN LIVES at risk, our Troops in danger, and our National Security in JEOPARDY.

Therefore, I am directing EVERY Federal Agency in the United States Government to IMMEDIATELY CEASE all use of Anthropic’s technology. We don’t need it, we don’t want it, and will not do business with them again! There will be a Six Month phase out period for Agencies like the Department of War who are using Anthropic’s products, at various levels. Anthropic better get their act together, and be helpful during this phase out period, or I will use the Full Power of the Presidency to make them comply, with major civil and criminal consequences to follow.

WE will decide the fate of our Country — NOT some out-of-control, Radical Left AI company run by people who have no idea what the real World is all about. Thank you for your attention to this matter. MAKE AMERICA GREAT AGAIN!

PRESIDENT DONALD J. TRUMP

You have to realize what exactly Anthropic refused: to extend the current contract to two things:

Refusing those two things makes it the only AI company that is “radical left”. Now Google, OpenAI, or Grok will do what Anthropic has done already, plus mass surveillance plus AI kill commands.

It’s crazy how not only ethics but now safety is “radically left”

Yeah, one of the two was a pure safety play, not even ethics.

If I sell the military an ATV for shuffling things around on base, I might engineer a speed limiter to prevent the ATV from going faster than what its safety features are rated for. But a demand that I remove the governor so that the vehicle can go all lawful speeds totally misses the point. Whether or not it is illegal or unethical to do so, it’s still bad engineering to use dangerous technology beyond the scope of what it (and its safety features) has been designed for.

Well, extremists love to abuse the concept of context

At the very least, didn’t Sam Altman/OpenAI say they intend to have these exact same restrictions?

Well, there’s news about them being the replacement. So, no, they won’t.

aaand he gave up on them already 😂

Yeah, Sam Altman says a lot of things…

like that he didn’t rape his sister

Hahahahahahhahahhahahahahhahhaha

Sam Altman the infamous grifter? The same Altman whose foot in the door into the tech industry was inflating user numbers to get his app sold? That Sam Altman?

I don’t want to paraphrase him out of context, so here is his full statement:

“I don’t personally think the Pentagon should be threatening DPA against these companies. But I also think that companies that choose to work with the Pentagon, as long as it is going to comply with legal protections and the few red lines that the field, we have, I think we share with Anthropic and that other companies also independently agree with, I think it is important to do that. For all the differences I have with Anthropic, I mostly trust them as a company, and I think they really do care about safety, and I’ve been happy that they’ve been supporting our warfighters.”

Just listened to the “Search Engine” episode about Anthropic that I think came out today? I am happy they stuck to their guns, but also, yeah, Google, OpenAI, Grok, whatever, will happily commit whatever crimes against humanity.

Yeah, it’s going to be Grok. Who wouldn’t want an autonomous Mecha-Hitler killing things?

And Anthropic refused? Surely they must be the evil ones!